Introduction

1 million tokens is about 750,000 words in English. That is roughly 4 to 5 million characters, or around 1,500 to 2,000 pages of text, depending on formatting, language, and the tokenizer used by the AI model.

That number is an estimate, not a fixed rule. A token can be a whole word, part of a word, punctuation, whitespace, or a piece of code. This is why two documents with the same word count can produce different token counts once they are processed by an AI model.

The important point is simple: tokens are the unit AI models use to measure usage and calculate cost. If you are estimating API spend, chatbot usage, document processing, or long-context prompts, word count alone is not enough.

Once you think in tokens instead of pages, AI pricing becomes much easier to forecast. Tools like AI Token Calculator help turn rough text volume into practical cost estimates across prompts, completions, and model pricing tiers.

Start calculating your token costs for free ⇒

The Short Answer: What is 1 Million Tokens in AI?

In English, 1 token is roughly 0.75 words. That means 1 million tokens is about 750,000 words.

It also works out to roughly 4 to 5 million characters, around 1,500 to 2,000 pages of text, or about five average-length books. These comparisons are useful for planning, but they are still estimates. AI systems count tokens, not words or pages.

| Measure | Approximate equivalent |

|---|---|

| Tokens | 1,000,000 |

| Words | About 750,000 words |

| Characters | About 4,000,000 to 5,000,000 characters |

| Pages of text | About 1,500 to 2,000 pages |

| Books | Roughly 5 average books |

| Long classic comparison | About 1.3 copies of War and Peace |

The exact conversion depends on the model, tokenizer, language, formatting, punctuation, and content type. Plain English text is usually easier to estimate. Code, tables, technical strings, short chat messages, and heavily formatted documents can produce different token counts.

For quick planning, use 750,000 words as the working estimate for 1 million tokens. For pricing, use an actual token calculator, because AI providers bill by token usage rather than by word count.

Token to Word Conversion (The Quick Math)

The quick math is simple:

- 1 token is about 0.75 words in English

- 1,000 tokens is about 750 words

- 100,000 tokens is about 75,000 words

- 1,000,000 tokens is about 750,000 words

If you want the practical takeaway, 1 million tokens is a lot of text. It can cover multiple novels, a big support knowledge base, long chat histories, or a large amount of API input and output combined.

How many pages are 1 million tokens?

1 million tokens is roughly 1,500 to 2,000 pages of English text, depending on formatting, page size, font, spacing, and the type of content being processed.

The simplest way to estimate it is to start with the word count. If 1 million tokens equals about 750,000 words, and a standard page contains around 375 to 500 words, the result is roughly 1,500 to 2,000 pages.

That number changes quickly in real workflows. Dense technical documents, code, tables, transcripts, and heavily formatted files may use tokens differently from plain English prose. Short words, punctuation, headings, line breaks, and special characters can all affect the final token count.

For budgeting, treat the page estimate as a planning shortcut, not a billing rule. AI models do not charge by page. They charge by tokens. A 100-page PDF with dense formatting can cost more to process than a 100-page plain-text document, even if both look similar to a person reading them.

Why Do LLMs Price Models Per 1 Million Tokens?

LLMs are priced per 1 million tokens because a single token is extremely small from a billing point of view. The cost of one token is usually a fraction of a fraction of a cent, so quoting prices per token, or even per 1,000 tokens, can get messy fast.

The older per-1,000-token format worked when models were cheaper, simpler to compare, and used in smaller volumes. But as API usage scaled, that unit became less practical. Developers had to compare tiny decimal-heavy prices across multiple vendors, input rates, output rates, and context windows, which made budgeting harder than it needed to be.

Pricing per 1 million tokens fixes that problem. It turns microscopic usage into a meaningful commercial unit, one that maps better to real workloads like long document processing, chatbot traffic, code generation, batch analysis, or enterprise automation.

That is why the per-1M-token baseline is now common across major model providers such as OpenAI, Anthropic, and Google. It gives teams a cleaner way to compare models side by side and estimate spend without constantly converting tiny numbers.

In practice, this makes procurement and forecasting much more straightforward:

- Cleaner price comparisons: costs are easier to read across vendors

- Better budget planning: teams can estimate usage at a monthly or product level

- More realistic workload sizing: 1 million tokens is large enough to reflect actual application usage

- Less decimal noise: pricing becomes easier to explain internally to finance, product, and engineering teams

If you are trying to model real API spend, the unit matters less than the forecasting method. A free tool like AI Token Calculator helps by turning token estimates into readable cost projections across top models, so you can compare pricing without doing manual math every time.

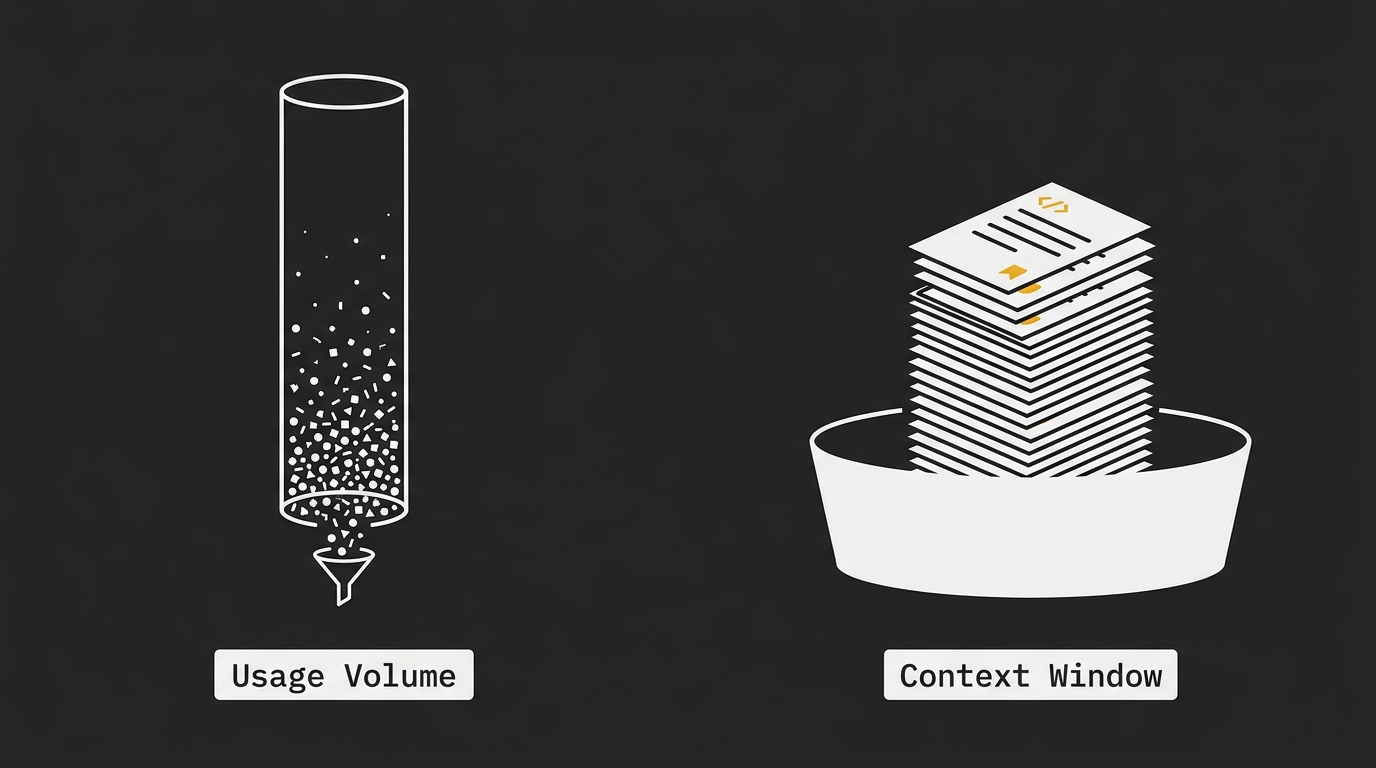

Context Window vs. Total Usage: Don’t Mix Them Up

These are two different things, and mixing them up causes a lot of pricing confusion.

A 1 million token context window means a model can accept up to that much text in a single request or active conversation state. 1 million tokens of usage means the total amount of input and output tokens you are billed for across however many API calls your app makes.

Think of it like this:

- Volume: your total token consumption over time

- Context window: the maximum amount of text the model can hold in one prompt or session

A large context window is a capability. A 1 million token usage figure is a billing quantity.

For example, an app might spend 1 million tokens across thousands of short chats without ever using a massive context window. On the flip side, a model like Gemini 3.1 Pro or Claude 4.6 Opus can read very large prompts at once, but doing that can consume a huge chunk of your token budget in a single interaction.

That is the key commercial point. Huge context windows are powerful, but they are not free. If you keep passing long PDFs, codebases, transcripts, or full conversation histories into the model, token usage climbs very fast because every request can include a large amount of text before the model even generates an answer.

Here is the clean distinction:

| Term | What it means | Billing impact |

| 1M token context window | The model can process up to 1 million tokens at once | Potentially very high per request |

| 1M tokens of usage | Your total billed token volume across calls | Spread across many requests or sessions |

In practice, this changes how you build:

- Short-chat app: usually burns tokens gradually across many small requests

- Long-document analysis tool: can burn tokens aggressively if full files are sent repeatedly

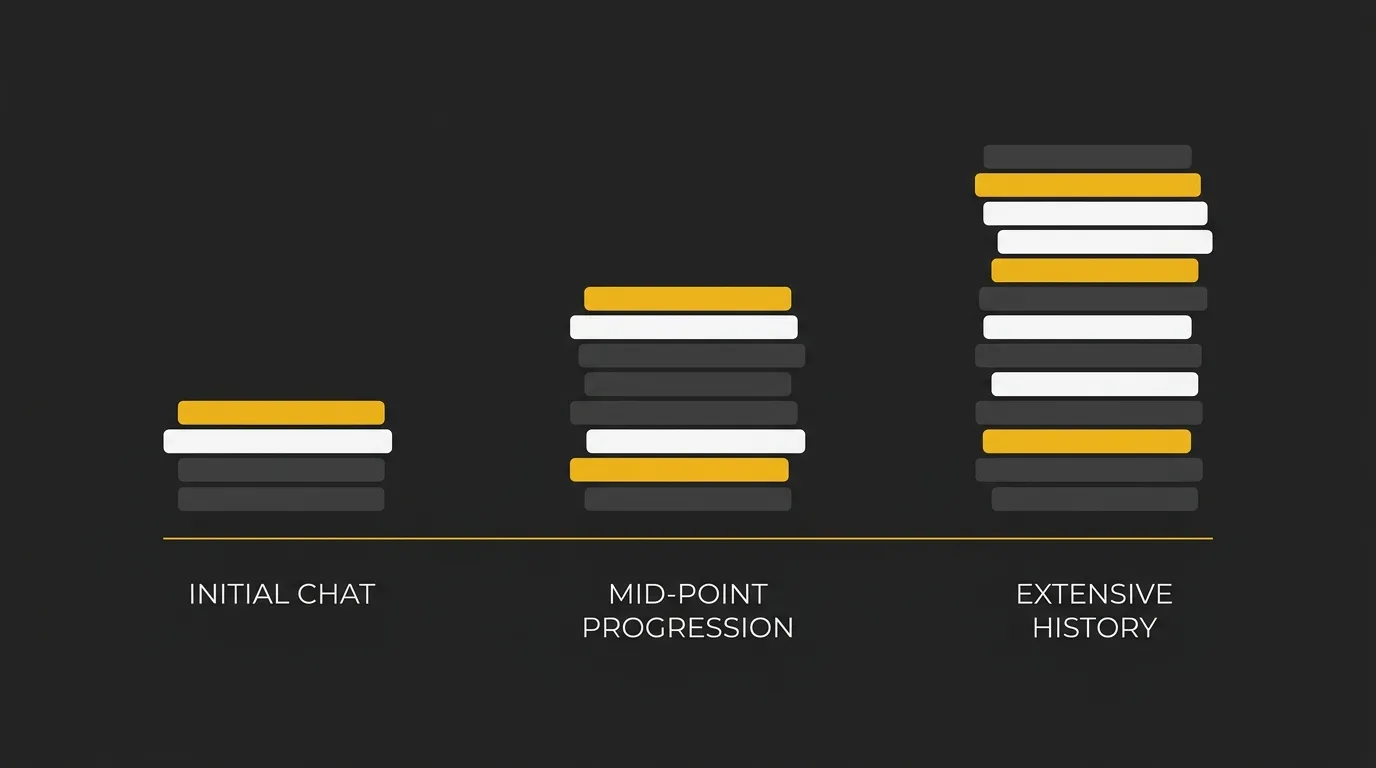

- Persistent chat with long history: each new turn can get more expensive if old messages remain in context

A big context window can improve product UX because you need fewer forced summaries and fewer hard resets. But it can also make token consumption less obvious, especially in long-running sessions where more history stays in play each turn.

The practical takeaway is simple: do not assume 1M context means 1M included usage. If your workflow keeps stuffing large amounts of text into prompts, you can burn through billed tokens surprisingly quickly. That is exactly where a tool like AI Token Calculator helps, because you can estimate the cost of large-context prompts before pushing them into production.

What Does 1 Million Tokens Actually Buy You?

1 million tokens is enough for a meaningful amount of work, not just a few prompts. In practical terms, it can cover large-batch jobs, high-volume content generation, or a decent chunk of ongoing app usage before you need to top up your budget.

The exact output depends on the model, the tokenizer, and how much of your usage is input versus output. But if you use the rough English conversion from earlier, 1 million tokens is about 750,000 words to work with.

Real-World Output Examples

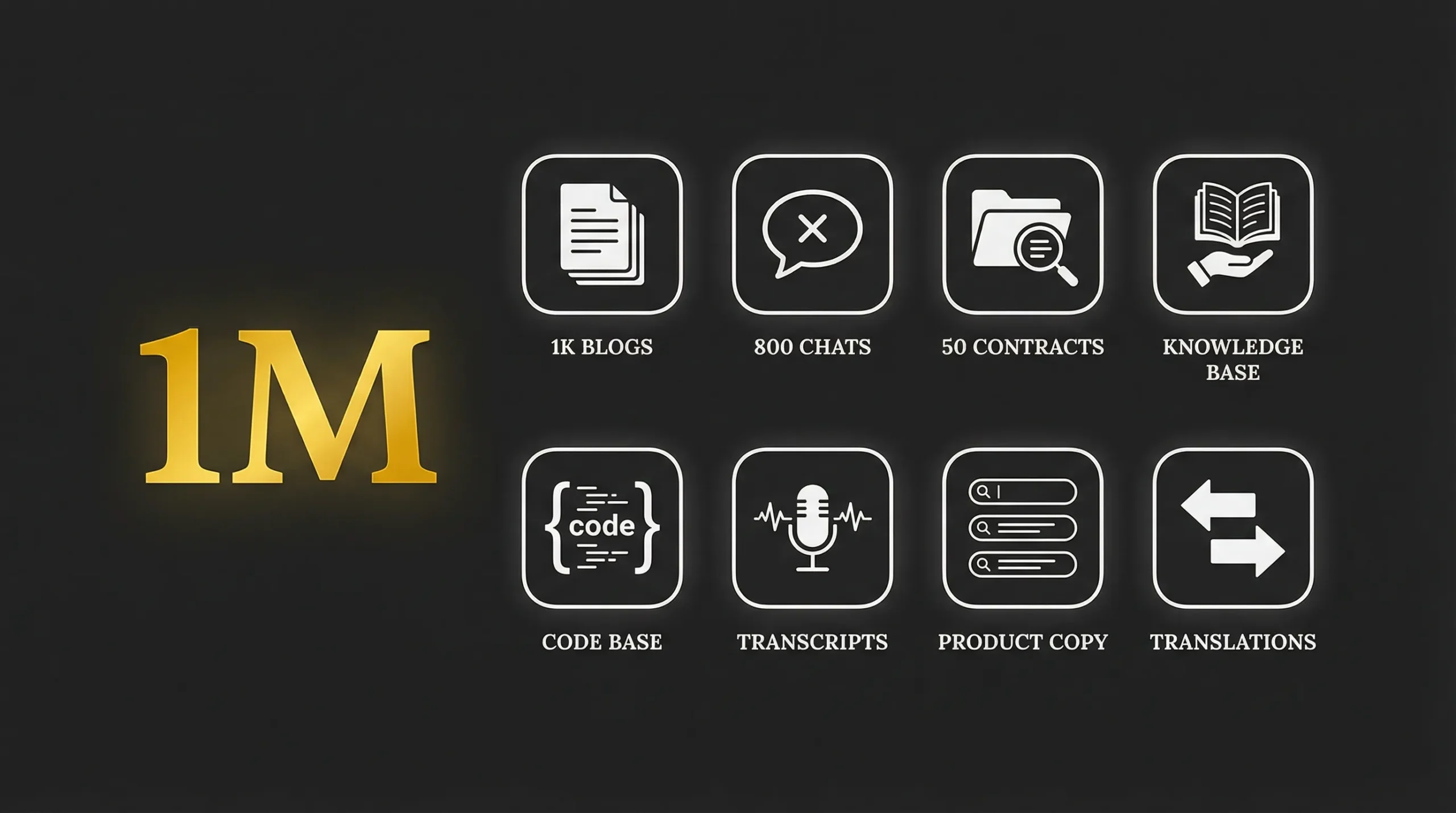

Here are concrete ways 1 million tokens might be used:

- Generate about 1,000 short blog posts: assuming each post is around 750 words.

- Run roughly 500 to 800 customer support conversations,depending on how long each chat is and how much prior history stays in context.

- Audit around 50 lengthy legal contracts: if the workflow is mainly document ingestion, extraction, and summarization.

- Process a large knowledge base migration: for example, feeding articles into an AI workflow for tagging, summarizing, or rewriting at scale.

- Analyze a sizable codebase in chunks: especially for review, documentation, refactoring suggestions, or file-by-file explanation.

- Summarize long meeting or call transcripts in bulk: useful for internal ops, sales calls, or support QA workflows.

- Power a document-heavy internal assistant for a limited period: where employees ask questions against uploaded manuals, SOPs, or policy files.

- Generate large volumes of product copy: such as descriptions, category text, metadata, and short-form support content across many SKUs.

- Classify or label large text datasets: including feedback tickets, survey responses, CRM notes, or moderation queues.

- Translate a substantial amount of plain-text content: if the workflow is mostly text in, text out, without heavy prompt overhead.

The important bit is this: 1 million tokens can disappear faster than expected when every request includes long prompts, long outputs, or repeated conversation history. The budget stretches much further when prompts are tight and the workflow is batch-optimized.

If you want to turn these examples into actual dollars, AI Token Calculator is useful because it lets you estimate cost by model instead of guessing from rough token math alone.

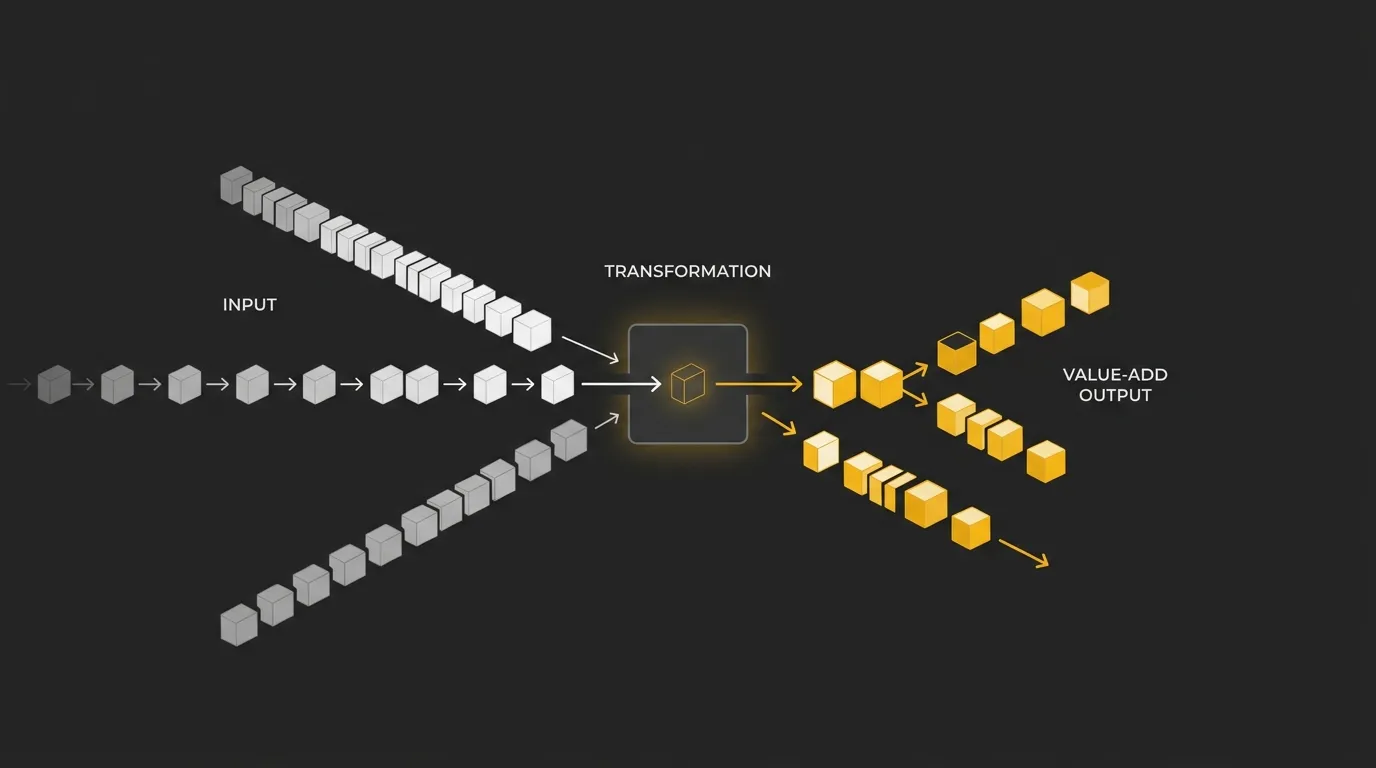

Input vs. Output Tokens: Understanding the Cost Split

Not all tokens cost the same. In most AI APIs, input tokens are cheaper than output tokens because reading text is computationally lighter than generating new text.

That pricing difference matters a lot when you model a 1 million token budget. If your workflow is mostly short prompts with long answers, costs can climb much faster than a workload built around large inputs and small responses.

A simple way to think about it:

- Input tokens: the text you send to the model, such as prompts, instructions, chat history, documents, or code

- Output tokens: the text the model generates back to you

Most providers charge roughly 3x to 5x more for output tokens than input tokens. The exact multiple depends on the model, but the pattern is common across the market: generation is the expensive part.

Here is why this catches people out. They estimate a project as 1 million tokens total, but they do not break down where those tokens sit.

| Usage mix | Cost impact |

| Mostly input, little output | Usually cheaper |

| Balanced input and output | Mid-range cost profile |

| Short input, heavy output | Usually more expensive |

| Repeated chat turns with growing history | Can get expensive quickly |

For example, these two workflows could both hit 1 million total tokens, but land at very different costs:

- Document classification: large inputs, short labels or summaries

- Long-form generation: short prompts, large generated responses

The second one is often more expensive because more of the budget sits in output tokens.

This is also why iterative back-and-forth work can burn tokens faster than expected. Every revision cycle adds more prompt history on the input side, then generates more output again. The total can snowball even if each individual turn feels small.

When you estimate spend, do not just ask how many tokens you will use. Ask how many will be input, how many will be output, and whether old conversation history is being resent each turn.

If you want a cleaner forecast, model both sides separately. AI Token Calculator is useful here because it lets you compare input and output pricing across top models instead of assuming 1 million tokens is one flat cost.

Next Steps: Forecast Your Token Costs

A rough word estimate is useful. A real cost forecast needs the token split.

Before you launch a chatbot, document workflow, content pipeline, or long-context AI feature, estimate how many tokens will be input, how many will be output, and which model will handle the workload. The same 1 million tokens can cost very different amounts depending on whether the usage is mostly reading, generation, or repeated chat history.

AI Token Calculator helps turn that estimate into a clear cost projection across top LLM models. Use it before you scale, not after the bill arrives.

Start calculating your token costs for free ⇒

FAQs About AI Tokens

How many characters are 1 million tokens?

Roughly 4 to 5 million characters in English. The exact number varies by language, formatting, punctuation, and the tokenizer the model uses.

How do you convert 1 million tokens to words?

Multiply the token count by 0.75 as a rough English estimate. That means 1 million tokens is about 750,000 words.

How much does 1 million tokens cost?

It depends on the model, ranging from about $0.15 for smaller models to $15 or more for frontier models. The final cost also depends on whether those tokens are input, output, or a mix of both.